
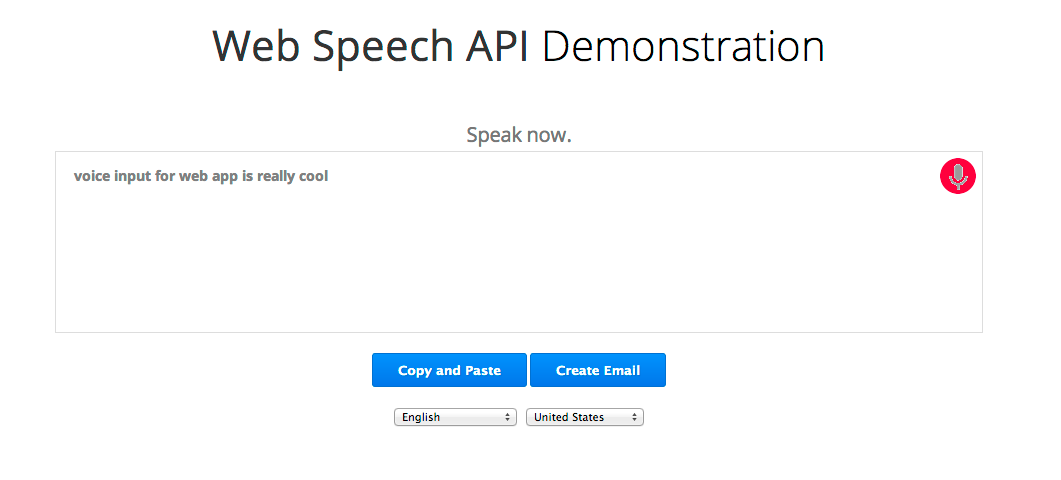

There are many people who need subtitles for their videos. These videos are not limited to movies or TV: any sort of media that has audio can potentially benefit from subtitles. That includes home videos, podcasts, lectures, and of course the hundreds of *ahem* perfectly legal movies on your hard drive. AI technology has long been advanced enough to do this, but so far only YouTube has made an effort.

The user simply provides the interface with a video file. The application then generates synced subtitles and provides a link to download them. Note: we have to wait for IBM Watson to process the audio. Even though the link is shown immediately, it will probably not work if you click it too early.

IBM Watson provides a Speech to Text API that accepts audio clips and returns transcripts with approximate timings. So we first rip the audio from the video using FFmpeg and send it to Watson. We then wait for a response and format the data into a subtitle file (SRT).

I started writing the app in Node.js, but it quickly became more and more complex and messy. Furthermore, since I was not very experienced with asynchronous programming, I fell into "callback hell". I later rewrote the whole app from scratch with a simpler approach. That removed more than half of the code and made it much cleaner.

Another challenge was Watson's processing speed. It is incredibly slow: you have to wait the entire length of the video for it to finish. I experimented a little with parallel requests to the API, which greatly improved the speed. However, the code became hard to manage, and in the interest of time I abandoned that.

Accomplishments that we're proud of

Becoming comfortable with asynchronous programming and Node callbacks. Finishing the project within 36 hours, so we have a working subtitle generator.

What we learned

When to `rm -rf *` and start again (or just slow down).

I didn't have enough time during this hackathon, but I want to implement parallel requests, which will greatly improve performance.

There are a number of different speech-to-text APIs available today. I experimented with IBM's Watson Speech to Text service to see how easy it is to integrate with an Android app and how accurate the transcriptions are. After some research, I created a sample Android app that demonstrates how to integrate IBM's Watson Speech to Text service. I have also put together a step-by-step guide on how to accomplish this.

To use IBM Watson's Speech to Text service in an Android app, you will need to:

1. Enable the Speech to Text service and obtain your API key.
2. Add the IBM Watson SDK to your Android project with Gradle.
3. Request the `INTERNET` and `RECORD_AUDIO` permissions in the Manifest file.
4. Make a runtime request in the Activity class for access to the `RECORD_AUDIO` permission.
5. Use the MediaRecorder API to make an audio recording of your speech.
6. Convert the audio recording to MP3 format using the FFmpeg library.
7. Provide the MP3 recording to the Speech to Text API.
8. Retrieve the response containing the converted text and display the text in a RecyclerView.
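Two steps convert media to MP3 with FFmpeg: the subtitle generator's "rip the audio from the video" and step 6 of the Android guide. Below is a minimal sketch of the FFmpeg command-line arguments, written as a TypeScript helper for a Node.js app like the hackathon project. `ripAudioArgs` and the `Slice` option are hypothetical names. The mono, 16 kHz, 64 kbit/s settings are assumptions meant to keep speech uploads small; they are not values from the original app. The Android guide itself uses an FFmpeg library inside the app rather than the CLI.

```typescript
// Optional slice of the input, in seconds (e.g. for chunked transcription).
interface Slice {
  startSeconds: number;
  durationSeconds: number;
}

// Builds an ffmpeg argument list that drops the video stream and writes
// mono 16 kHz MP3 audio. Encoding settings are illustrative assumptions.
function ripAudioArgs(inputPath: string, outputPath: string, slice?: Slice): string[] {
  const args = ["-y"]; // overwrite outputPath if it already exists
  if (slice) args.push("-ss", String(slice.startSeconds)); // seek in the input before decoding
  args.push("-i", inputPath);
  if (slice) args.push("-t", String(slice.durationSeconds)); // limit output duration
  args.push(
    "-vn", // no video stream in the output
    "-ac", "1", // one audio channel
    "-ar", "16000", // 16 kHz sample rate
    "-codec:a", "libmp3lame", // MP3 encoder (requires an ffmpeg build with LAME)
    "-b:a", "64k", // audio bitrate
    outputPath,
  );
  return args;
}
```

In Node, the array can be passed directly to `child_process.spawn("ffmpeg", args)`. This avoids shell quoting problems with file names that contain spaces.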
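Sending audio to Watson and reading back the text (steps 7 and 8, and the "send it to Watson" stage of the subtitle generator) comes down to one HTTP call. The sketch below calls the Speech to Text REST endpoint (`POST {serviceUrl}/v1/recognize`) directly with `fetch`. It does not use the Android SDK the guide relies on. It assumes Node 18+ (global `fetch` and `btoa`), an MP3 already in memory, and the instance URL and API key from step 1. `buildRecognizeRequest`, `recognizeMp3` and `transcriptLines` are illustrative names, not SDK functions.

```typescript
// The parts of a /v1/recognize response used here.
interface WatsonAlternative {
  transcript: string;
  confidence?: number;
  // Present when the request sets timestamps=true: [word, startSec, endSec].
  timestamps?: [string, number, number][];
}
interface WatsonResult {
  alternatives: WatsonAlternative[];
  final: boolean;
}
interface WatsonRecognizeResponse {
  results: WatsonResult[];
}

// Builds the URL and headers for a synchronous recognize call.
// serviceUrl is the instance URL shown alongside your credentials.
function buildRecognizeRequest(
  serviceUrl: string,
  apiKey: string,
): { url: string; headers: Record<string, string> } {
  const params = new URLSearchParams({ timestamps: "true" });
  // Append to the instance path instead of resolving against the host root.
  const url = `${serviceUrl.replace(/\/+$/, "")}/v1/recognize?${params.toString()}`;
  const headers = {
    // IBM Cloud API keys are sent as HTTP Basic auth with the username "apikey".
    Authorization: "Basic " + btoa(`apikey:${apiKey}`),
    "Content-Type": "audio/mp3",
  };
  return { url, headers };
}

// Sends MP3 bytes to Watson and returns the parsed JSON response.
async function recognizeMp3(
  serviceUrl: string,
  apiKey: string,
  mp3: ArrayBuffer,
): Promise<WatsonRecognizeResponse> {
  const { url, headers } = buildRecognizeRequest(serviceUrl, apiKey);
  const res = await fetch(url, { method: "POST", headers, body: mp3 });
  if (!res.ok) {
    throw new Error(`Watson recognize failed: ${res.status} ${await res.text()}`);
  }
  return (await res.json()) as WatsonRecognizeResponse;
}

// Takes the top alternative of each result and drops empty ones.
function transcriptLines(response: WatsonRecognizeResponse): string[] {
  return response.results
    .map((r) => r.alternatives[0]?.transcript.trim() ?? "")
    .filter((line) => line.length > 0);
}
```

In step 8, the strings returned by `transcriptLines` would be passed to a RecyclerView adapter, one row per result Watson returns.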
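The subtitle generator's last stage ("format the data into a subtitle file (SRT)") can be sketched as follows. The sketch assumes the Watson request asked for `timestamps=true`, so each alternative carries `[word, start, end]` triples in seconds. The cue-splitting rule is a hypothetical choice, not necessarily what the original app did: it allows at most `maxWords` words or `maxSeconds` seconds per cue.

```typescript
// A word timing as returned by Watson: [word, startSeconds, endSeconds].
type WordTiming = [string, number, number];

interface Cue {
  start: number; // seconds
  end: number; // seconds
  text: string;
}

// Formats seconds as an SRT timestamp: HH:MM:SS,mmm.
function formatSrtTime(seconds: number): string {
  const totalMs = Math.round(seconds * 1000);
  const h = Math.floor(totalMs / 3600000);
  const m = Math.floor((totalMs % 3600000) / 60000);
  const s = Math.floor((totalMs % 60000) / 1000);
  const ms = totalMs % 1000;
  const pad = (n: number, width: number) => String(n).padStart(width, "0");
  return `${pad(h, 2)}:${pad(m, 2)}:${pad(s, 2)},${pad(ms, 3)}`;
}

// Groups words into cues. A new cue starts when adding the next word would
// exceed maxWords words or make the cue longer than maxSeconds.
function groupIntoCues(words: WordTiming[], maxWords = 7, maxSeconds = 5): Cue[] {
  const cues: Cue[] = [];
  let current: WordTiming[] = [];
  const flush = () => {
    if (current.length === 0) return;
    cues.push({
      start: current[0][1],
      end: current[current.length - 1][2],
      text: current.map(([word]) => word).join(" "),
    });
    current = [];
  };
  for (const w of words) {
    const tooMany = current.length >= maxWords;
    const tooLong = current.length > 0 && w[2] - current[0][1] > maxSeconds;
    if (tooMany || tooLong) flush();
    current.push(w);
  }
  flush();
  return cues;
}

// Serializes cues in SRT layout: index, time range, text, blank line.
function toSrt(cues: Cue[]): string {
  return cues
    .map((c, i) => `${i + 1}\n${formatSrtTime(c.start)} --> ${formatSrtTime(c.end)}\n${c.text}\n`)
    .join("\n");
}
```

For a full response, the word triples from every result can be concatenated in order before grouping, for example with `results.flatMap(r => r.alternatives[0]?.timestamps ?? [])`.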
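The parallel-requests idea mentioned in the challenges and in the closing hackathon paragraph can be sketched as follows:

1. Cut the audio into fixed-length chunks.
2. Transcribe a few chunks at a time.
3. Shift each chunk's word timings by the chunk's start, so the merged list lines up with the original video.

The chunk length, the concurrency limit, and the injected `transcribe` callback are assumptions for illustration. In a real app, `transcribe` would extract the chunk with FFmpeg and call Watson. All function names here are hypothetical.

```typescript
// A word timing: [word, startSeconds, endSeconds].
type WordTiming = [string, number, number];

interface Chunk {
  start: number; // offset into the full audio, seconds
  duration: number; // seconds
}

// Splits totalSeconds of audio into consecutive chunks of at most chunkSeconds.
function planChunks(totalSeconds: number, chunkSeconds: number): Chunk[] {
  const chunks: Chunk[] = [];
  for (let start = 0; start < totalSeconds; start += chunkSeconds) {
    chunks.push({ start, duration: Math.min(chunkSeconds, totalSeconds - start) });
  }
  return chunks;
}

// Runs fn over items with at most `limit` calls in flight, preserving order.
async function mapWithConcurrency<T, R>(
  items: T[],
  limit: number,
  fn: (item: T, index: number) => Promise<R>,
): Promise<R[]> {
  const results = new Array<R>(items.length);
  let next = 0;
  // Each worker claims the next unprocessed index. This is safe because the
  // claim (next++) happens synchronously, between awaits.
  const worker = async (): Promise<void> => {
    while (next < items.length) {
      const i = next++;
      results[i] = await fn(items[i], i);
    }
  };
  const workerCount = Math.max(1, Math.min(limit, items.length));
  await Promise.all(Array.from({ length: workerCount }, () => worker()));
  return results;
}

// Transcribes chunks concurrently and maps word timings onto the full timeline.
async function transcribeInParallel(
  chunks: Chunk[],
  limit: number,
  transcribe: (chunk: Chunk, index: number) => Promise<WordTiming[]>,
): Promise<WordTiming[]> {
  const perChunk = await mapWithConcurrency(chunks, limit, transcribe);
  return perChunk.flatMap((words, i) =>
    words.map(([word, s, e]): WordTiming => [word, s + chunks[i].start, e + chunks[i].start]),
  );
}
```

Fixed cut points can split a word across two chunks. One way to soften that is to overlap chunks slightly and drop duplicate words near the seams, at the cost of extra bookkeeping.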
